Cloud-hosted AI systems have made advanced model access simple. But as AI moves from experimentation into daily operations, a different question emerges: should every intelligent workflow depend entirely on external compute?

For many businesses, the answer will not be a full rejection of cloud AI. The more realistic future is hybrid. Cloud models remain valuable for frontier performance, elastic scale, and fast experimentation. Local compute infrastructure becomes valuable for privacy-sensitive workflows, predictable usage patterns, internal automation, model customization, and operational control.

Key findings

- Strategic shift: AI compute is becoming operational infrastructure. As AI becomes embedded in workflows, compute strategy becomes part of governance, cost control, and system architecture.

- Primary business driver: control. Local infrastructure gives organizations more control over data movement, model access, internal latency, and long-term cost behavior.

- Most effective model: hybrid compute architecture. Cloud for frontier capability and burst scale; local compute for private, repeatable, and operationally sensitive workloads.

Cloud versus local is the wrong question

The strategic issue is not whether cloud or local compute wins. The issue is whether businesses understand which parts of their AI operating layer should be rented, which parts should be owned, and which parts should remain portable.

Why local compute is becoming more practical

Historically, local AI infrastructure required expensive GPU servers, specialized deployment knowledge, and heavy maintenance. That barrier is falling. Hardware with large unified memory pools, efficient local inference frameworks, and distributed runtimes has made smaller-scale private AI infrastructure more realistic. The point is not that any one hardware stack is the universal answer; it is that local AI infrastructure is now credible enough for businesses to evaluate seriously.

The case for owned AI compute

Why businesses evaluate local compute

1. Data sovereignty

Some workflows involve customer records, financial documents, legal material, proprietary research, internal communications, or operational records that leaders do not want moving through third-party inference environments unless necessary.

2. Predictable cost

Cloud AI is easy to start but can become difficult to forecast as usage grows. Local infrastructure changes the profile from variable operating expense to capital investment plus maintenance and support.

3. Workflow resilience

A business that depends entirely on external AI APIs inherits its vendors' outages, price changes, rate limits, and policy changes. Local infrastructure creates a fallback layer.

4. Customization

Local deployments give teams more freedom to test open models, fine-tune specialized systems, control retrieval pipelines, and integrate inference tightly with internal tools.

5. Latency and proximity

For some workflows, especially internal agents or operational tools, reducing network dependency improves responsiveness and allows the system to run in network-restricted environments.
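The cost argument in item 2 can be made concrete with a simple break-even calculation. The sketch below is illustrative only: the function name, the cost model (a flat monthly cloud bill compared with an upfront hardware purchase plus a flat monthly upkeep cost), and every figure in the example are assumptions, not data from any vendor or deployment.

```python
import math
from typing import Optional


def breakeven_months(
    cloud_monthly: float,
    local_capex: float,
    local_monthly: float,
) -> Optional[int]:
    """Return the first whole month in which cumulative local cost is no
    higher than cumulative cloud cost, or None if that never happens.

    Simplifying assumptions: costs are flat month to month, and the model
    ignores discounting, hardware resale value, and usage growth.
    """
    monthly_saving = cloud_monthly - local_monthly
    if monthly_saving <= 0:
        # Local upkeep alone costs at least as much as cloud: no break-even.
        return None
    return math.ceil(local_capex / monthly_saving)


# Hypothetical figures, chosen only to show the calculation.
months = breakeven_months(
    cloud_monthly=4000.0,   # assumed monthly cloud inference spend
    local_capex=60000.0,    # assumed upfront hardware cost
    local_monthly=1500.0,   # assumed power, maintenance, and support
)
print(months)  # 24
```

A real total-cost-of-ownership model would also account for staff time, hardware refresh cycles, and changes in usage, so a result like this is a starting point for discussion rather than a forecast.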

A practical compute strategy framework

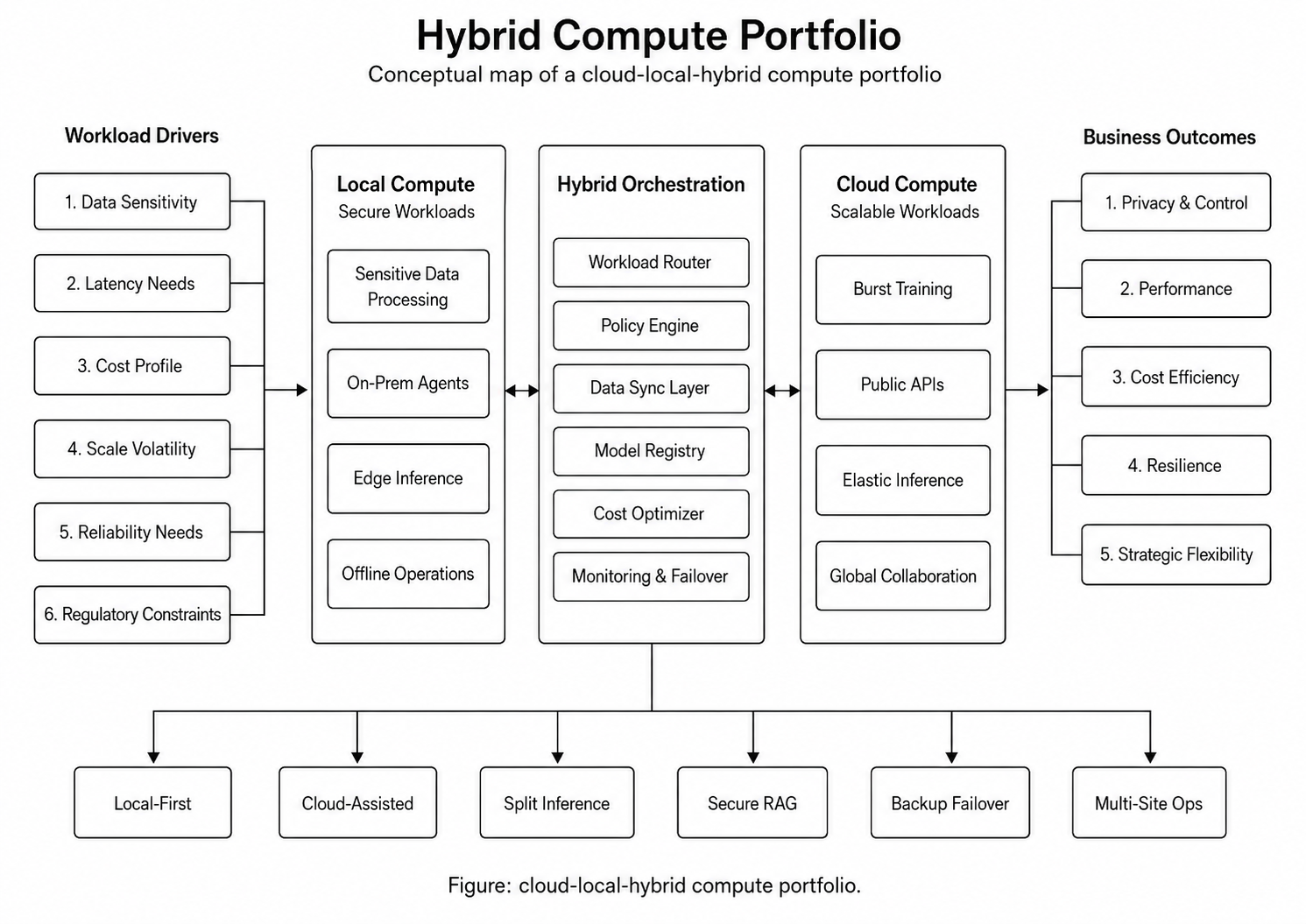

Hybrid compute portfolio

Where local compute fits best

- Internal document intelligence — Summarizing, classifying, extracting, and routing internal files without sending every document to an external model.

- Repetitive operational workflows — Intake processing, ticket triage, record normalization, report generation, and compliance-review support.

- Private RAG systems — Retrieval over internal knowledge bases, policies, contracts, research archives, technical documents, and customer records.

- Development and testing — Teams can prototype workflows locally before deciding whether production should run on cloud, local infrastructure, or hybrid paths.

- Executive and regulated environments — Leadership, legal, finance, healthcare, defense-adjacent, and research-heavy organizations may value infrastructure control even when cloud remains part of the broader stack.

Where local compute may not fit

- The business needs frontier model quality at all times.

- Usage is unpredictable and highly elastic.

- The team lacks infrastructure support.

- The workload requires uptime guarantees the company cannot maintain internally.

- The model ecosystem changes faster than the team can keep its deployment current.

- The total cost of ownership is not clearly understood.

Design requirements for business-owned AI compute

- Workload classification — Define which AI workloads are local-first, cloud-first, or hybrid.

- Model governance — Maintain approved models, version history, evaluation results, and usage rules.

- Data boundary rules — Identify what data can leave the organization and what must remain local.

- Observability — Track latency, throughput, errors, cost, utilization, and user activity.

- Fallback paths — Define when local inference should escalate to stronger cloud models or human review.

- Security controls — Manage access, logging, network exposure, secrets, and system updates.

- Evaluation process — Test local model performance against business tasks before relying on it operationally.

- Lifecycle planning — Account for hardware refresh cycles, model changes, framework updates, and maintenance.
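Several of these requirements, in particular workload classification, data boundary rules, and fallback paths, can be written down as an explicit routing policy. The following is a minimal sketch under assumed names: the `Sensitivity` levels, the `Workload` fields, the route labels, and the confidence threshold are all hypothetical, and a real policy would come from the organization's own governance rules.

```python
from dataclasses import dataclass
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3  # assumed meaning: data must not leave the organization


class Route(Enum):
    LOCAL = "local"
    CLOUD = "cloud"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Workload:
    name: str
    sensitivity: Sensitivity
    needs_frontier_quality: bool


def initial_route(w: Workload) -> Route:
    """Apply the data boundary rule first, then the capability rule."""
    if w.sensitivity is Sensitivity.RESTRICTED:
        return Route.LOCAL
    if w.needs_frontier_quality:
        return Route.CLOUD
    return Route.LOCAL  # local-first default for routine work


def escalate(w: Workload, local_confidence: float, threshold: float = 0.7) -> Route:
    """Fallback path for a weak local result.

    Restricted data escalates to human review instead of a cloud model,
    so the data boundary rule still holds during escalation.
    """
    if local_confidence >= threshold:
        return Route.LOCAL
    if w.sensitivity is Sensitivity.RESTRICTED:
        return Route.HUMAN_REVIEW
    return Route.CLOUD


# Hypothetical workloads for illustration.
contract = Workload("contract-extraction", Sensitivity.RESTRICTED, True)
triage = Workload("ticket-triage", Sensitivity.INTERNAL, False)

print(initial_route(contract).value)   # local
print(escalate(contract, 0.4).value)   # human_review
print(escalate(triage, 0.4).value)     # cloud
```

Keeping the policy in one small, testable module makes it easier to review alongside the model governance and observability requirements, since every routing decision can be logged with the rule that produced it.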

Strategic implication

AI compute is becoming a board-level infrastructure question. In the early phase of AI adoption, companies asked which model to use. In the next phase, stronger organizations will ask what compute architecture gives them the right balance of intelligence, control, cost, privacy, and resilience.

Businesses that treat AI only as a software subscription may move quickly at first, but they risk building critical workflows on infrastructure they do not control. Businesses that overcorrect into fully local systems may gain control but lose access to the strongest frontier capabilities. The advantage belongs to organizations that can design a flexible compute layer: cloud when it provides leverage, local when control matters, hybrid when the workflow requires both.

“The future of enterprise AI will not be purely cloud or purely local. It will belong to businesses that know which intelligence to rent, which infrastructure to own, and which workflows must remain portable.”